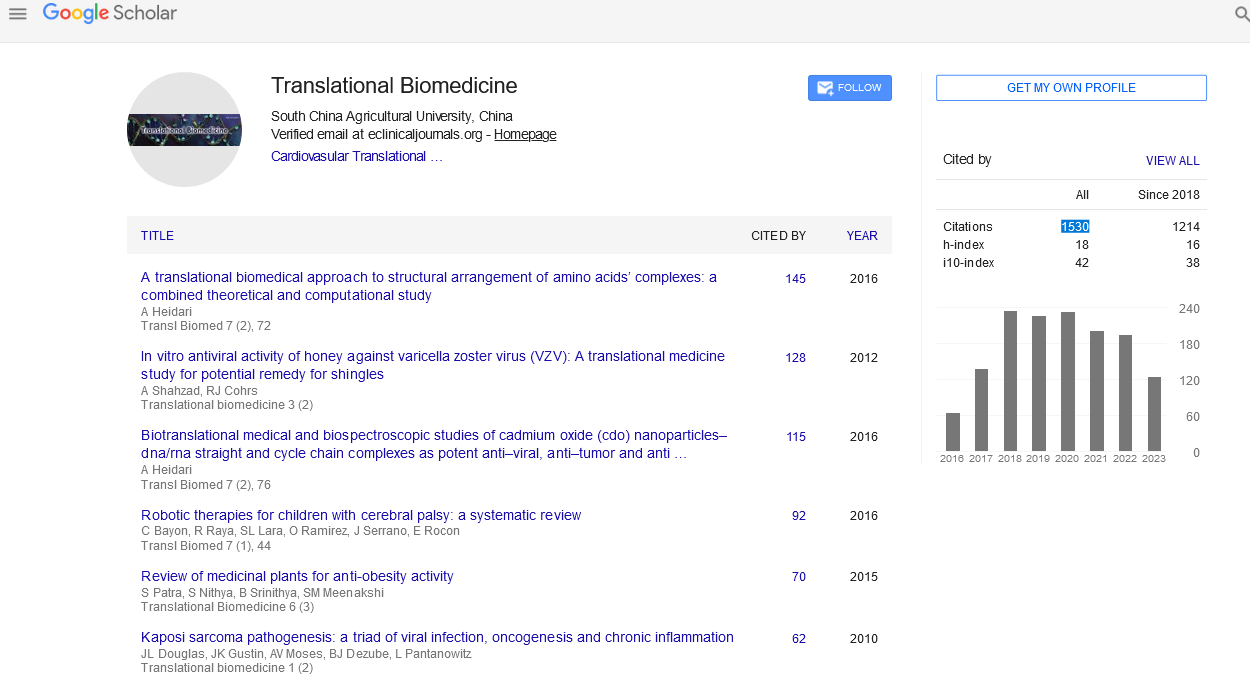

Mini Review - (2022) Volume 13, Issue 6

Parkinson’s Disease by Translational Informatics

Alina Trifan*

Department of Laboratory Medicine, Yale University, USA

*Correspondence: Alina Trifan, Department of Laboratory Medicine, Yale University, USA

Email:

Received: 02-Jun-2022, Manuscript No. Iptb-22-12880;

Editor assigned: 08-Jun-2022, Pre QC No. PQ -12880;

Reviewed: 22-Jun-2022, QC No. Iptb-12880;

Revised: 27-Jun-2022, Manuscript No. Iptb-22-12880 (R);

Published: 01-Jul-2022, DOI: 10.21767/2172-0479.100236

Introduction

As the world population ages, the prevalence and mortality of Parkinson's disease (PD), a common neurological condition of the elderly, are rising. The conventional biomedical research paradigm of going from small data to big data is being replaced by the identification of small, actionable alterations from big data. To emphasise the use of big data for precision PD medicine, we cover big data and informatics for the translation of basic PD research to clinical applications. We highlight several major findings on clinically actionable alterations, such as susceptibility genetic variations for screening populations at risk of PD, biomarkers for the diagnosis and stratification of PD patients, risk factors for PD, and lifestyles for PD prevention.

The growing older population is transforming the overall disease spectrum. Alzheimer's disease (AD) and PD are two age-related diseases with rising morbidity and mortality rates worldwide [1]. Because medical and labour resources are inadequate, the societal burden of caring for elderly individuals is becoming increasingly heavy [2]. The shortage of medical care resources, combined with the rising demands of an ageing society, could hamper economic and social growth.

PD is one of the neurodegenerative diseases (NDDs) that most frequently affect elderly people. One of the most common movement disorders, PD typically progresses relatively slowly, although progression may accelerate in later years [3]. It can take more than 20 years to go from the onset of neurodegeneration, through the appearance of prodromal signs, to the presentation of the typical clinical manifestations of the disease [4]. Currently, a PubMed search with the terms "Parkinson's disease OR Parkinson disease" returns more than 87,500 records of PD research. However, the molecular cause and mechanism of PD are still unknown.

Because early detection and prevention of PD can lessen the burden on society and on families, they are preferable to clinical treatment of advanced disease [5]. Before any findings can be translated into practice, many fundamental questions in PD research still need to be answered. These include the identification of biomarkers for individualised patient diagnosis and stratification, the identification of genetic or environmental factors for screening particularly susceptible populations, and the identification of healthy lifestyles to support individualised healthcare for the elderly [6].

Understanding the molecular mechanism of PD and answering the questions raised above requires modelling the intricate relationships between genotype and phenotype, which in turn requires sufficient data and knowledge of the genotypes and phenotypic manifestations of the various PD subtypes. Biotechnologies, especially high-throughput sequencing, have advanced quickly in recent decades [8]. The cost of whole-genome sequencing has dropped from hundreds of millions of dollars to a few hundred, while deep sequencing for genomic architecture, gene expression, and epigenetic markers is also becoming less expensive [7, 9]. In addition to the physiological data gathered from wearable sensors, the biological data obtained from point-of-care tests and medical imaging data, such as magnetic resonance imaging and positron emission tomography-computed tomography, are all growing at an unprecedented rate. Ours is an era of big data and digital medicine [10]. These data include both preclinical and clinical data from patients as well as data from healthy individuals.

The typical paradigm of translational research for the discovery of prognostic biomarkers or risk factors has been to go from small data to big data. It begins with a hypothesis-driven investigation of the bioactivities of a small set of genes, proteins, or other biological molecules, followed by tests of their biological roles and medical functions, moving from cell lines, animal models, and a small number of patients to validation in large populations. Because features or discoveries derived from small data do not necessarily hold in a large and diverse data space, biomarker and drug discoveries frequently fail in late-phase studies.
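The failure mode described above, in which a finding from small data does not hold up in a larger data space, can be illustrated with a minimal simulation. The sketch below is a toy under stated assumptions, not an analysis of real PD data: every candidate marker is simulated noise with no true case-control difference, and the function names `group_difference` and `simulate_discovery` and all parameter values are hypothetical.

```python
import random
from statistics import mean


def group_difference(cases, controls):
    """Absolute difference in mean marker level between cases and controls."""
    return abs(mean(cases) - mean(controls))


def simulate_discovery(n_markers=200, n_small=10, n_large=5000, seed=0):
    """Pick the best-looking marker in a small cohort, then re-test it in a large one.

    Assumption: every simulated marker is pure Gaussian noise, so any effect
    seen in the small cohort is a chance finding amplified by selection.
    """
    rng = random.Random(seed)

    def draw(n):
        # n independent standard-normal marker measurements
        return [rng.gauss(0.0, 1.0) for _ in range(n)]

    # Discovery: screen all candidate markers in the small cohort
    # and keep the largest apparent case-control difference.
    small_effects = [
        group_difference(draw(n_small), draw(n_small)) for _ in range(n_markers)
    ]
    best_small_effect = max(small_effects)

    # Validation: re-measure the selected marker in a large cohort.
    # The marker is noise, so fresh independent draws represent it.
    large_effect = group_difference(draw(n_large), draw(n_large))
    return best_small_effect, large_effect


if __name__ == "__main__":
    small, large = simulate_discovery()
    print(f"apparent effect in small cohort: {small:.2f}")
    print(f"effect on re-test in large cohort: {large:.2f}")
```

Under these assumptions, the marker that looks best among 200 candidates in 10 cases and 10 controls would be expected to show a much smaller difference when re-measured in 5,000 cases and 5,000 controls, because the apparent effect arose from selecting among chance fluctuations.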

Discussion

The paradigm for biomedical research is now changing: rather than going from small data to big data, it goes from big data to small data, separating small, actionable alterations out of large data sets. Translational informatics for PD faces three challenges in making this shift.

Cross-level and real-time integration of PD biological data

Physiological signals can also be regarded as a type of phenotype. Between the genotype and the clinical phenotype of PD lie many layers, including the molecular and cellular phenotypes. As a result, the connection between a patient's genotype and clinical phenotype is extremely complicated. Most PD data and information are currently dispersed across these levels and need to be connected and integrated. The data can also be arranged along the temporal dimension according to pathogenesis and progression. For correlation studies, data at these levels are typically statistically averaged and adjusted; however, such techniques frequently average out the patterns present in subgroups of the examined samples. To model the disease system accurately, paired data for every level between genotype and disease phenotype will be necessary. If the paired data are gathered as a time series, the course and trajectory of PD might be predicted. The Cancer Genome Atlas, built for cancer research, is a typical paradigm that could be applied in the future to achieve cross-level and dynamic integration of PD data. When small data are used for PD modelling, some complicated patterns cannot be represented in the limited data space; as a result, a model trained on a small data set will probably not work when it is applied to a large data space.

Mechanism-based identification of key players and holistic, systems-level modelling

Without adequate data for modelling PD causation and progression, we can see only parts of the complex PD "elephant". To comprehend the complexity and heterogeneity of the PD process, a holistic, systems-level characterisation is required. A challenge for future PD translational informatics will be identifying the key players at the systems level, such as biomarkers for PD classification and risk factors for screening high-risk populations. In contrast to traditional disease-gene identification, the goal of big data-based modelling will be to find the systems-level genes, pathways, modules, or sub-networks that drive the system from a healthy state to a disease state.

Dynamic modelling and systems-level control of PD progression

The cause and course of PD change dynamically because PD results from a dynamic interplay between the patient's genetics, environment, and lifestyle. Dynamic information from the human body, such as routine blood tests and physiological signals collected in real time, makes it possible to model the dynamic evolution of PD.
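The point made earlier in this section, that averaging across all samples can mask patterns present in subgroups, can be illustrated with a small constructed example. The values below are invented for illustration and are not PD measurements; the helper `pearson` and the variable names are hypothetical. Two subgroups share the same marker values, but the phenotype rises with the marker in one subgroup and falls with it in the other.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Invented toy data: the same marker levels measured in two subgroups.
marker = [1, 2, 3, 4, 5]
phenotype_a = [1, 2, 3, 4, 5]  # subgroup A: phenotype rises with the marker
phenotype_b = [5, 4, 3, 2, 1]  # subgroup B: phenotype falls with the marker

r_a = pearson(marker, phenotype_a)
r_b = pearson(marker, phenotype_b)

# Pooling both subgroups, as a population-level average would do.
r_pooled = pearson(marker + marker, phenotype_a + phenotype_b)

if __name__ == "__main__":
    print(f"subgroup A: r = {r_a:+.2f}")
    print(f"subgroup B: r = {r_b:+.2f}")
    print(f"pooled:     r = {r_pooled:+.2f}")
```

In this constructed case the correlation is +1 in subgroup A and -1 in subgroup B, yet the pooled correlation is 0, so a pooled analysis would report no association at all. Real data would be noisier, but the example shows why paired, subgroup-resolved data matter for modelling.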

Conclusion

Although identifying the important hubs and connections in these dynamic systems will be difficult, it will provide an opportunity for rational drug design or lifestyle changes to control the development of PD. Technical, scientific, and societal factors all play a role in the translational informatics study of PD. Technically, direct-to-consumer point-of-care testing may make clinical laboratory tests easy to perform. Wearable sensors, smartphones, and cloud computing may work together to capture physiological signals in real time. Healthy people, family members, nurses, doctors, and data analysts might all be connected through the cloud to handle the data in a crowdsourcing paradigm. Scientifically, the interactions between genetics, lifestyle, physiological signals, microbiota, and environment are deepening our understanding of PD. Systems biology and evolutionary medicine now provide the paradigm for studying these interactions in order to better understand complex diseases such as cancer and NDDs, including PD. Socially and economically, an ageing population and the high cost of clinical PD management call for greater emphasis on PD prevention and prediction, and governments are encouraging the healthcare market, particularly for age-related diseases such as Alzheimer's disease and PD. By overcoming the three challenges of PD data integration described above, translational informatics for PD studies will open many prospects for scientific discovery and clinical applications.

Before PD families were screened for mutations in SNCA, the gene that encodes alpha-synuclein, PD was regarded as a sporadic, non-genetic condition. With the discovery of more genetic risk factors, PD is now understood to be a disease with complex genetic, lifestyle, and environmental interactions, ranging from monogenic to polygenic inheritance.

Acknowledgement

None

Conflict of Interest

None

REFERENCES

- Yakunin E, Kisos H, Kulik W, Grigoletto J, Wanders RJ et al. (2014) The regulation of catalase activity by PPAR gamma is affected by alpha-synuclein. Ann Clin Transl Neurol 1: 145-159.

- Patel V, Kumar AK, Paul VK, Rao KD, Reddy KS et al. (2011) Universal health care in India: The time is right. The Lancet 377: 448-449.

- Schrag A, Barone P, Brown RG, Leentjens AF, McDonald WM et al. (2007) Depression rating scales in Parkinson’s disease: critique and recommendations. Mov Disord 22: 1077-1092.

- Pearce MM, Kopito RR (2017) Prion-like characteristics of polyglutamine-containing proteins. Cold Spring Harb Perspect Med 10: 1101.

- Ruottinen HM, Rinne UK (1996) Entacapone prolongs levodopa response in a one-month double blind study in parkinsonian patients with levodopa-related fluctuations. J Neurol Neurosurg Psychiatry 60: 36-40.

- Mochizuki H, Ishii N, Shiomi K, Nakazato M (2017) Clinical features and electrocardiogram parameters in Parkinson's disease. Neurol Int 9: 7356.

- Rochester L, Galna B, Lord S, Yarnall AJ, Morris R et al. (2017) Decrease in Abeta42 predicts dopa-resistant gait progression in early Parkinson disease. Neurology 88: 1501-1511.

- Chamuleau SAJ, Van Der Naald M, Climent AM, Kraaijeveld AO, Wever KE et al. (2018) Translational Research in Cardiovascular Repair a Call for a Paradigm Shift. Circ Res 122: 310-318.

- Ayub M, Bayley H (2012) Individual RNA base recognition in immobilized oligonucleotides using a protein nanopore. Nano Lett 12: 5637-5643.

- Tanha K, Pashazadeh AM, Pogue BW (2015) Review of biomedical Čerenkov luminescence imaging applications. Biomed Opt Express 6: 3053-3065.

Citation: Trifan A (2022) Parkinson’s Disease by Translational Informatics. Transl Biomed, Vol. 13 No. 6: 236.